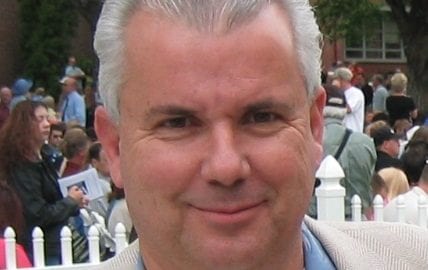

Robert Epstein, a senior research psychologist at the American Institute for Behavioral Research and Technology in California and the former editor of Psychology Today, is one of only a few scholars who have conducted empirical studies on the ability of digital platforms to manipulate opinions and outcomes. In a 2015 study, Epstein and Ronald E. Robertson reported the discovery of what they consider “one of the largest behavioral effects ever identified”: the search engine manipulation effect (SEME). Simply placing search results in a particular order, they found, can shift voters’ preferences dramatically, “up to 80 percent in some demographic groups.”

“What I stumbled upon in 2012 or early 2013 quite by accident was a particular mechanism that shows how you can shift opinions and votes once you’ve got people hooked to the screen,” said Epstein.

While much of the political and public scrutiny of digital platforms has been focused on the behavior of bad actors like Cambridge Analytica, Epstein called these scandals a distraction, saying, “Don’t worry about Cambridge Analytica. That’s just a content provider.” Instead, he said, the power of digital platforms to manipulate users lies in the filtering and ordering of information: “It’s no longer the content that matters. It’s just the filtering and ordering.” Those functions, he noted, are largely dominated by two companies: Google and, to a lesser extent, Facebook.

SEME, said Epstein, is but one of five psychological effects of using search engines he and his colleagues are studying, all of which are completely invisible to users. “These are some of the largest effects ever discovered in the behavioral sciences,” he claimed, “but since they use ephemeral stimuli, they leave no trace. In other words, they leave no trace for authorities to track.”

Another effect Epstein discussed is the search suggestion effect (SSE). Google, Epstein’s most recent paper argues, has the power to manipulate opinions from the very first character that people type into the search bar. Google, he claimed, is also “exercising that power.”

“We have determined, through our research, that the search suggestion effect can turn a 50/50 split among undecided [voters] into a 90/10 split just by manipulating search suggestions.”

One simple way to do this, he said, is to suppress negative suggestions. In 2016, Epstein and his coauthors noticed a peculiar pattern when typing the words “Hillary Clinton is” into Google, Yahoo, and Bing. In the latter two, the autocomplete suggested searches like “Hillary Clinton is evil,” “Hillary Clinton is a liar,” and “Hillary Clinton is dying of cancer.” Google, however, suggested far more flattering phrases, such as “Hillary Clinton is winning.” Google has argued that the differences can be explained by its policy of removing offensive and hateful suggestions, but Epstein argues that this is but one example of the massive opinion-shifting capabilities of digital platforms. Google, he argues, has likely been determining the outcomes of a quarter of the world’s elections in recent years through these tools.

“The search engine is the most powerful source of mind control ever invented in the history of humanity,” he said. “The fact that it’s mainly controlled by one company in almost every country in the world, except Russia and China, just astonishes me.”

Epstein declined to speculate whether these biases are the result of deliberate manipulation on the part of platform companies. “They could just be from neglect,” he said. However, he noted, “if you buy into this notion, which Google sells through its PR people, that a lot of these funny things that happen are organic, [that] it’s all driven by users, that’s complete and utter nonsense. I’ve been a programmer since I was 13 and I can tell you, you could build an algorithm that sends people to Alex Jones’s videos or away from Alex Jones’s videos. You can easily alter whatever your algorithm is doing to send people anywhere you want to send them. The bottom line is, there’s nothing really organic. Google has complete control over what they put in front of people’s eyes.”

A “Nielsen-type network” of global monitoring, suggested Epstein, might provide a partial solution. Together with “prominent business people and academics on three continents,” he said, he has been working on developing such a system that would track the “ephemeral stimuli” used by digital platforms. By using such a system, he said, “we will make these companies accountable to the public. We will be able to report irregularities to authorities, to law enforcement, to regulators, antitrust investigators, as these various manipulations are occurring. We think long-term that is the solution to the problems we’re facing with these big tech companies.”

Source: ZeroHedge